Brain-computer interfaces are now making headlines in newspapers around the world. They capture the imagination because they seem to make miracles possible. At the end of March came the news of Bill Kochevar, a 53-year-old man paralysed from the shoulders down after a bike accident, who was able to feed himself through the BrainGate2 interface. It did indeed seem like a technological miracle, to bystanders and to Bill himself (see the video clip).

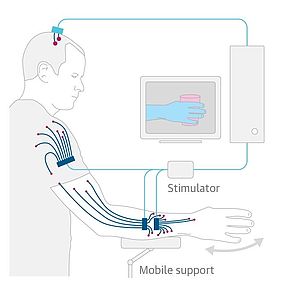

You can get the full details on how surgeons were able to restore movement in one of his limbs in one of the articles. In short, the BrainGate2 chip implanted in Bill’s cortex captured the electrical signals generated by the brain, and a computer analysed those signals to detect the intention to perform a specific movement. This was translated into electrical signals delivered to the muscles of Bill’s arm and hand, which responded by performing the desired action.

However, it is not really true that Bill simply thought of feeding himself and, “voilà”, his arm and hand moved accordingly. He had to undergo four months of training to learn what to think so that the computer could detect the appropriate electrical signals in the brain and activate the muscles in the arm and hand. This activation is not an easy feat. Muscles need to be activated in a specific order and with specific strength, and this requires a lot of training on the computer side too. Bill has to look constantly at what his arm and hand are doing and change his thinking to control the computer that controls his muscles.

The whole procedure is slow, but for a person who is paralysed like Bill the restoration of functionality, even partial, is a “miracle”.

There are still huge obstacles to overcome before a “brain” can directly interface with a “machine” at the physical level. We are getting better at interfacing through language: think of Amazon Echo or Apple Siri. We are also developing robots that can observe what we do and learn from it, yet another form of communication between a “brain” and a machine.

Notice, however, that in Bill’s case voice communication would not work. Of course a computer would be perfectly able to understand Bill’s voice asking to eat a pretzel, but it would not be able to control Bill’s muscles without the continuous supervision of Bill’s brain. Seeing how the muscles react to the computer’s stimulation, the brain sends signals to the computer that change the stimuli, again and again, until the desired movement is achieved.
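To make the role of that continuous supervision more concrete, here is a toy sketch of the difference between a one-shot command and a loop with visual feedback. It is only an illustration: the function names, the one-dimensional "hand position", and the `gain` and `muscle_response` values are all assumptions made up for this example, not details of the BrainGate2 system.

```python
def open_loop_reach(target, muscle_response=0.7):
    """One-shot command: stimulate once and hope the muscle does what was asked.

    muscle_response is a made-up factor standing in for the fact that muscles
    do not respond exactly as the computer expects.
    """
    stimulation = target                  # computer assumes a perfect muscle
    return muscle_response * stimulation  # the hand ends up short of the target


def closed_loop_reach(target, gain=0.5, muscle_response=0.7,
                      tolerance=0.01, max_steps=100):
    """Repeated correction: the person watches the hand and keeps adjusting."""
    position = 0.0
    for step in range(1, max_steps + 1):
        error = target - position         # what the eyes see: the remaining gap
        if abs(error) <= tolerance:
            return position, step - 1     # close enough, stop correcting
        stimulation = gain * error        # hypothetical decoded intention
        position += muscle_response * stimulation
    return position, max_steps


if __name__ == "__main__":
    print("open loop ends at:", open_loop_reach(1.0))
    final_position, corrections = closed_loop_reach(1.0)
    print("closed loop ends at:", final_position, "after", corrections, "corrections")
```

In this toy model the one-shot command stops well short of the target, while the loop closes the gap through many small corrections. That is also why the procedure is slow: every correction needs another look and another adjustment.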

Indeed, orchestrating the muscles to perform a complex movement is very hard, and picking up a pretzel and bringing it to your mouth to chew is a complex movement.